师兄和同门在做SLAM的时候,经常会用到的 octomap ,也就是八叉树地图。octomap相比于点云地图来说大大减小了地图的存储空间。既然octomap可以用于导航和避障,那么自然也可以导入moveit!,作为机械臂路径规划过程中的障碍物,方便机械臂和障碍物之间进行碰撞检测。 这里首先要提一下Movelt!并没有整合了物体识别和环境建模这些模块,而是利用传感器采集的数据导入的。而我的想法就是通过双目相机的深度及RGB图像生成用于碰撞检测的 octomap,这些octomap也可以依据贝叶斯准则不断实时更新。这样机械臂就可以避开真实世界的障碍物了。

碰撞检测是运动规划的一大难题,我们需要对每个采样点做有效性判断。所以,运动规划需要提供一个高效的碰撞检测算法。虽然Movelt!需要从外部传感器获取点云或深度信息,但是碰撞检测的算法moveit!还是完美集成了FCL( Flexible Collision Library),可以非常快速地实现octomap的碰撞检测。这个算法在学习ROS的时候,好像就囫囵吞枣的使用过了,当时还是手动往moveit!中导入物体的model的。值得一提的是,对于场景中每个物体都进行碰撞检测是浪费时间的,于是我们在配置moveit!的时候曾经生成过ACM(Allowed Collision Matrix)来进行优化。

碰撞检测是运动规划的一大难题,我们需要对每个采样点做有效性判断。所以,运动规划需要提供一个高效的碰撞检测算法。虽然Movelt!需要从外部传感器获取点云或深度信息,但是碰撞检测的算法moveit!还是完美集成了FCL( Flexible Collision Library),可以非常快速地实现octomap的碰撞检测。这个算法在学习ROS的时候,好像就囫囵吞枣的使用过了,当时还是手动往moveit!中导入物体的model的。值得一提的是,对于场景中每个物体都进行碰撞检测是浪费时间的,于是我们在配置moveit!的时候曾经生成过ACM(Allowed Collision Matrix)来进行优化。

1.ROS中深度图像转换成点云地图PCL

如果不是在ROS中操作的话,需要安装PCL库,并进行一定的依赖安装和配置,可以参考如下官网连接https://github.com/OctoMap/octomap 和大佬博客 https://blog.csdn.net/LOVE1055259415/article/details/79911653 大佬提供都是通过读取.pcd文件然后转换成octomap并写入.ot等文件当中,对于咱ROS中的使用不太方便,需要通过ROS消息的方式订阅点云消息并转换成octomap然后发布导入moveit!中。而且我所使用的传感器是双目相机,最开始获取的数据是RGBD信息,所以首先需要的是深度图像转换成点云信息的方法。于是构建了一个depth2pointcloud包测试一下

#include <pcl/point_types.h>

#include <pcl/io/pcd_io.h>

#include <iostream>

#include <string>

#include <stdlib.h>

#include <stdio.h>

#include <sstream>

#include <vector>

#include <opencv2/opencv.hpp>

#include<ros/ros.h>

#include<pcl/point_cloud.h>

#include<pcl_conversions/pcl_conversions.h>

#include<sensor_msgs/PointCloud2.h>

using namespace cv;

using namespace std;

pcl::PointCloud<pcl::PointXYZRGB>::Ptr depth2cloud( cv::Mat rgb_image, cv::Mat depth_image )

{

# 相机参数

float f = 570.3;

float cx = 320.0, cy = 240.0;

pcl::PointCloud<pcl::PointXYZRGB>::Ptr cloud_ptr( new pcl::PointCloud<pcl::PointXYZRGB> () );

cloud_ptr->width = rgb_image.cols;

cloud_ptr->height = rgb_image.rows;

cloud_ptr->is_dense = false;

for ( int y = 0; y < rgb_image.rows; ++ y ) {

for ( int x = 0; x < rgb_image.cols; ++ x ) {

pcl::PointXYZRGB pt;

if ( depth_image.at<unsigned short>(y, x) != 0 )

{

pt.z = depth_image.at<unsigned short>(y, x)/1000.0;

pt.x = (x-cx)*pt.z/f;

pt.y = (y-cy)*pt.z/f;

pt.r = rgb_image.at<cv::Vec3b>(y, x)[2];

pt.g = rgb_image.at<cv::Vec3b>(y, x)[1];

pt.b = rgb_image.at<cv::Vec3b>(y, x)[0];

cloud_ptr->points.push_back( pt );

}

else

{

pt.z = 0;

pt.x = 0;

pt.y = 0;

pt.r = 0;

pt.g = 0;

pt.b = 0;

cloud_ptr->points.push_back( pt );

}

}

}

return cloud_ptr;

}

int main(int argc,char* argv[])

{

ros::init (argc, argv, "publish_depth");

ros::NodeHandle nh;

cv::Mat depth;

cv::Mat image;

image=cv::imread("/home/redwall/catkin_ws/src/depth2pointcloud/data/rgb_index/144.ppm");

depth=cv::imread("/home/redwall/catkin_ws/src/depth2pointcloud/data/dep_index/144.pgm",IMREAD_ANYDEPTH);

string pcdName("/home/redwall/catkin_ws/src/pointcloud2octomap/data/testpcd.pcd");

if(!image.data||!depth.data) // 判断图片调入是否成功

{

cout<< "no image"<<endl;

return -1; // 调入图片失败则退出

}

pcl::PointCloud<pcl::PointXYZRGB>::Ptr cloud(new pcl::PointCloud<pcl::PointXYZRGB>);

cloud=depth2cloud(image,depth);

pcl::io::savePCDFileASCII(pcdName,*cloud);

cout<<"successful"<<endl;

return 0;

}

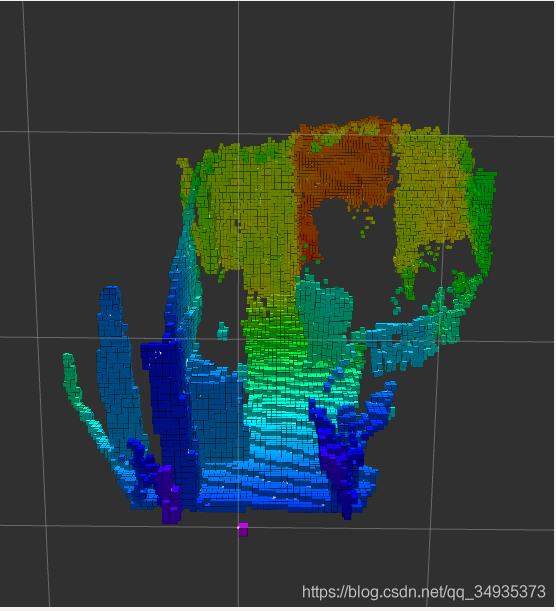

上述ROS包通过读取RGB图片和 深度图像,利用pcl库方法转换成 <PointXYZRGB>形式的点云文件,并写入pcd文件保存,然后通过大佬的方法转换成的octomap如下:

2.ROS中深度图像直接转换成octomap

既然测试完毕,那就可以直接通过depth转换成octomap了,上面使用的方法同时使用了RGBD,转换成PointXYZRGB,简单起见,可以舍弃RGB信息,仅使用深度图像来生成<PointXYZ>,然后生成octomap,详细请参考《PCL点云库》。 1.创建ROS包:depth2octomap 2.CMakeLists.txt和package.xml

cmake_minimum_required(VERSION 2.8.3)

project(depth2octomap)

find_package(catkin REQUIRED COMPONENTS

roscpp

rospy

std_msgs

rostime

sensor_msgs

message_filters

cv_bridge

image_transport

)

find_package(PCL REQUIRED)

find_package(OpenCV REQUIRED)

find_package(OpenMP)

catkin_package()

include_directories(${catkin_INCLUDE_DIRS} ${PCL_INCLUDE_DIRS} ${OPENCV_INCLUDE_DIRS})

add_executable (depth2octomap src/depth2octomap.cpp)

target_link_libraries (depth2octomap ${catkin_LIBRARIES} ${PCL_LIBRARIES} ${OpenCV_LIBS})

<?xml version="1.0"?>

<package>

<name>depth2octomap</name>

<version>0.0.0</version>

<description>The depth2octomap package</description>

<maintainer email="dyc@todo.todo">crp</maintainer>

<license>TODO</license>

<buildtool_depend>catkin</buildtool_depend>

<build_depend>roscpp</build_depend>

<build_depend>rospy</build_depend>

<build_depend>std_msgs</build_depend>

<build_depend>cv_bridge</build_depend>

<build_depend>image_transport</build_depend>

<run_depend>roscpp</run_depend>

<run_depend>rospy</run_depend>

<run_depend>std_msgs</run_depend>

<run_depend>cv_bridge</run_depend>

<run_depend>image_transport</run_depend>

<export>

</export>

</package>

3.src/depth2octomap.cpp

#include <ros/ros.h>

#include <image_transport/image_transport.h>

#include <opencv2/highgui/highgui.hpp>

#include <cv_bridge/cv_bridge.h>

#include <sensor_msgs/image_encodings.h>

#include <sensor_msgs/PointCloud2.h>

// PCL 库

#include <pcl/io/pcd_io.h>

#include <pcl/point_types.h>

#include <pcl/visualization/pcl_visualizer.h>

#include <pcl_conversions/pcl_conversions.h>

// 定义点云类型

typedef pcl::PointCloud<pcl::PointXYZRGB> PointCloud;

using namespace std;

using namespace cv;

// 相机内参

const double camera_factor = 1000;

const double camera_cx = 319.025;

const double camera_cy = 236.750;

const double camera_fx = 384.657;

const double camera_fy = 384.657;

// 全局变量:图像矩阵和点云

cv_bridge::CvImagePtr color_ptr, depth_ptr;

cv::Mat color_pic, depth_pic;

/*** RGB处理 ***/

void color_Callback(const sensor_msgs::ImageConstPtr& color_msg)

{

try

{

color_ptr = cv_bridge::toCvCopy(color_msg, sensor_msgs::image_encodings::BGR8);

cv::waitKey(1050); // 不断刷新图像,频率时间为int delay,单位为ms

}

catch (cv_bridge::Exception& e )

{

ROS_ERROR("Could not convert from '%s' to 'bgr8'.", color_msg->encoding.c_str());

}

color_pic = color_ptr->image;

}

/*** Depth处理 ***/

void depth_Callback(const sensor_msgs::ImageConstPtr& depth_msg)

{

try

{

depth_ptr = cv_bridge::toCvCopy(depth_msg, sensor_msgs::image_encodings::TYPE_32FC1);

cv::waitKey(1050);

}

catch (cv_bridge::Exception& e)

{

ROS_ERROR("Could not convert from '%s' to 'mono16'.", depth_msg->encoding.c_str());

}

depth_pic = depth_ptr->image;

}

int main(int argc, char **argv)

{

ros::init(argc, argv, "publish_octomap");

ros::NodeHandle nh;

image_transport::ImageTransport it(nh);

image_transport::Subscriber sub = it.subscribe("/camera/color/image_raw", 1, color_Callback);

image_transport::Subscriber sub1 = it.subscribe("/camera/depth/image_rect_raw", 1, depth_Callback);

ros::Publisher pointcloud_publisher = nh.advertise<sensor_msgs::PointCloud2>("/pointcloud/output", 1);

// 点云变量

PointCloud::Ptr cloud ( new PointCloud );

sensor_msgs::PointCloud2 pub_pointcloud;

double sample_rate = 1.0; // 1HZ

ros::Rate naptime(sample_rate); // use to regulate loop rate

while (ros::ok()) {

// 遍历深度图

for (int m = 0; m < depth_pic.rows; m++){

for (int n = 0; n < depth_pic.cols; n++){

// 获取深度图中(m,n)处的值

float d = depth_pic.ptr<float>(m)[n];//ushort d = depth_pic.ptr<ushort>(m)[n];

// d 可能没有值,若如此,跳过此点

if (d == 0)

continue;

// d 存在值,则向点云增加一个点

pcl::PointXYZRGB p;

// 计算这个点的空间坐标

// p.z = double(d) / camera_factor;

// p.x = (n - camera_cx) * p.z / camera_fx;

// p.y = (m - camera_cy) * p.z / camera_fy;

// 相机模型是垂直的

p.x = double(d) / camera_factor;

p.y = -(n - camera_cx) * p.x / camera_fx;

p.z = -(m - camera_cy) * p.x / camera_fy;

// 从rgb图像中获取它的颜色

// rgb是三通道的BGR格式图,所以按下面的顺序获取颜色

p.b = color_pic.ptr<uchar>(m)[n*3];

p.g = color_pic.ptr<uchar>(m)[n*3+1];

p.r = color_pic.ptr<uchar>(m)[n*3+2];

// 把p加入到点云中

cloud->points.push_back( p );

}

}

// 设置并保存点云

cloud->height = 1;

cloud->width = cloud->points.size();

ROS_INFO("point cloud size = %d",cloud->width);

cloud->is_dense = false;// 转换点云的数据类型

pcl::toROSMsg(*cloud,pub_pointcloud);

pub_pointcloud.header.frame_id = "camera_link";

pub_pointcloud.header.stamp = ros::Time::now();

// 发布合成点云

pointcloud_publisher.publish(pub_pointcloud);

// 清除数据并退出

cloud->points.clear();

ros::spinOnce(); //allow data update from callback;

naptime.sleep(); // wait for remainder of specified period;

}

}

4.上面的depth2octomap节点已经将深度转换成点云了,至于怎么转换成octomap还需要通过launch来启动octomap_server节点:octomaptransform.launch

<launch>

<node name="depth2octomap" pkg="depth2octomap" type="depth2octomap"/>

<node pkg="octomap_server" type="octomap_server_node" name="octomap_server">

<!-- resolution in meters per pixel -->

<param name="resolution" value="0.05" />

<!-- name of the fixed frame, needs to be "/map" for SLAM -->

<param name="frame_id" type="string" value="camera_link" />

<!-- max range / depth resolution of the kinect in meter -->

<param name="sensor_model/max_range" value="100.0" />

<param name="latch" value="true" />

<!-- max/min height for occupancy map, should be in meters -->

<param name="pointcloud_max_z" value="100" />

<param name="pointcloud_min_z" value="-100" />

<!-- topic from where pointcloud2 messages are subscribed -->

<remap from="/cloud_in" to="/pointcloud/output" />

</node>

</launch>

3.Realsense深度转octomap

刚写这篇博客的时候,还没有相机在手上,结果刚过两天,老板的realsense D435就发货了,花了两天时间在ROS中完成了相机的启动,https://blog.csdn.net/qq_34935373/article/details/104891420 ,通过相机发布RGBD信息,然后用上面的包转换成octomap,其中还有一些细节需要关注: 1.相机参数矩阵获取:fx、fy、cx、cy,realsense提供了获取这些参数的API,可以查看出厂的标定参数https://github.com/IntelRealSense/librealsense/issues/5239 2.计算点云在空间中的坐标的时候,因为realsense发布的带模型的launch的时候,由于模型在RViz中式水平放置的,所以坐标需要调整成x方向,而不是原本的z方向,因此就有如下调整: // 计算这个点的空间坐标 //p.z = double(d) / camera_factor; //p.x = (n – camera_cx) * p.z / camera_fx; //p.y = (m – camera_cy) * p.z / camera_fy; // 相机模型是垂直的 p.x = double(d) / camera_factor; p.y = -(n – camera_cx) * p.x / camera_fx; p.z = -(m – camera_cy) * p.x / camera_fy; 3.通过配置octosever修改点云空间范围

<param name="pointcloud_max_z" value="100" />

<param name="pointcloud_min_z" value="-100" />

# 启动相机节点,发布RGBD信息

$ roslaunch realsense2_camera rs_camera.launch

# 订阅RGBD信息,转换成点云并发布成octomap

$ roslaunch depth2octomap octomaptransform.launch

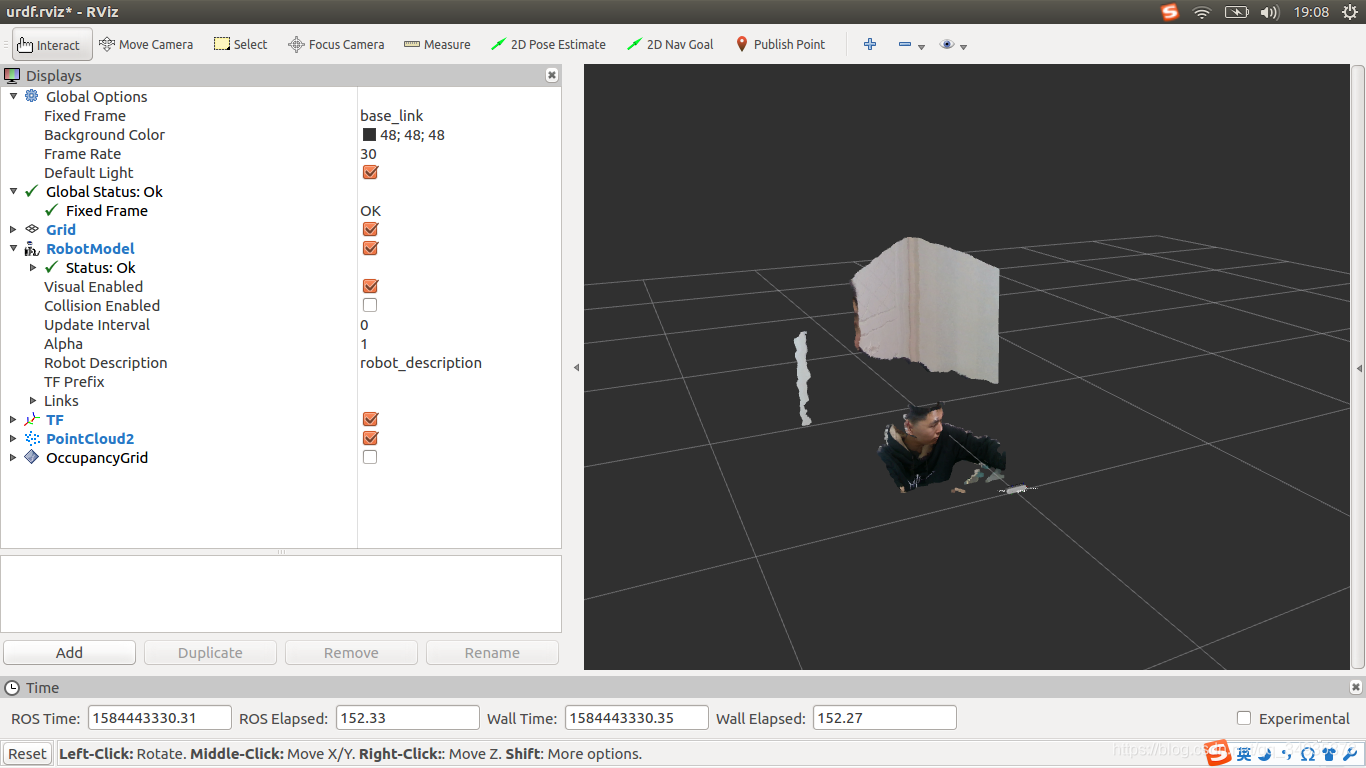

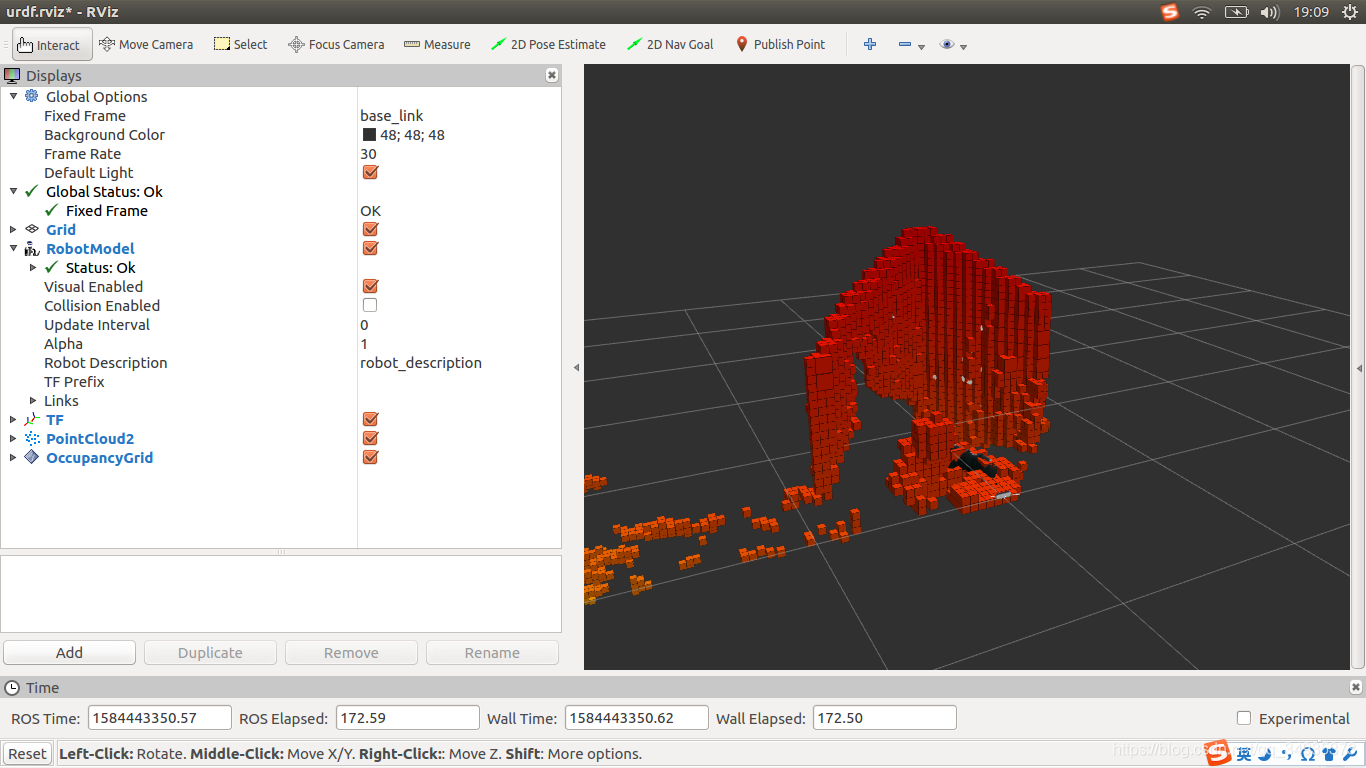

下面两幅图分别是点云图和八叉树图:

4.TODO

当然,在获取点云数据时 ,由于设备精度,操作者经验环境因素带来的影响,被测物体表面性质变化和数据拼接配准操作过程的影响,点云数据中讲不可避免的出现一些噪声。所以还需要在上述文件中根据需要加上点云滤波,离群点舍弃等操作。参考大神:https://blog.csdn.net/qq_34719188/article/details/79179430

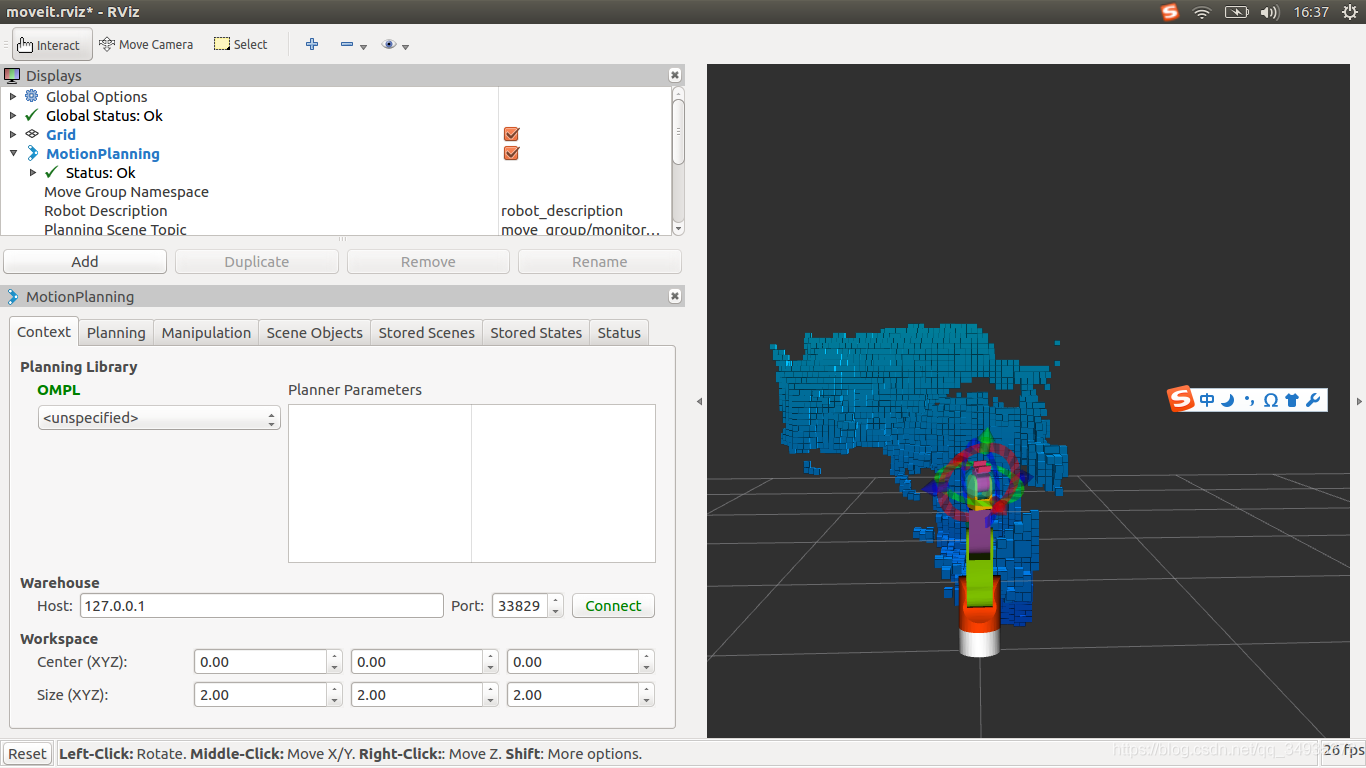

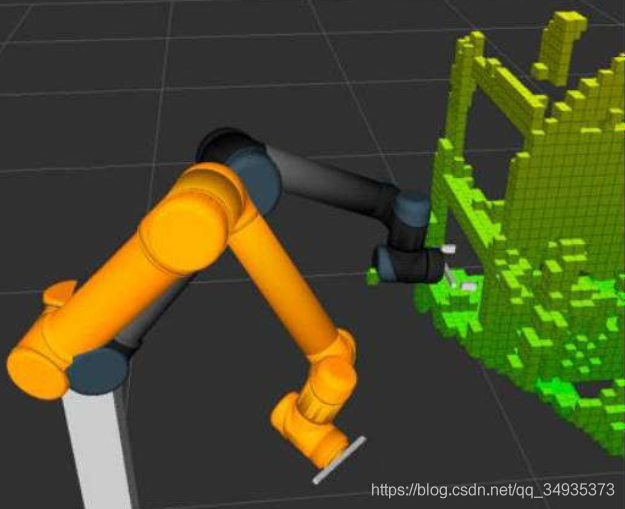

Moveit!通过场景规划Planning_scene导入octomap

和之前的方法差不多类似,只不过应用的场景变成了moveit!当中,通过使用深度相机感知环境数据,感知后的环境可以作为环境障碍物,机械臂规划路径时会主动避开有octomap地图的地方。官网配置 http://docs.ros.org/indigo/api/pr2_moveit_tutorials/html/planning/src/doc/perception_configuration.html,其中用到了moveit的组件 Occupancy Map Updater,Occupancy Map Updater 接受两种数据:

- The PointCloud Occupany Map Updater: which can take as input point clouds (sensor_msgs/PointCloud2)

- The Depth Image Occupancy Map Updater: which can take as input Depth Images (sensor_msgs/Image)

1.和之前配置moveit控制器的时候的套路是类似的,找到redwall_arm_moveit_config包中的config文件夹(官方使用的是PR2包,或者直接使用自己的包),新建一个sensors_3d.yaml(文件名随意),写入如下内容:

# The name of this file shouldn't be changed, or else the Setup Assistant won't detect it

sensors:

- sensor_plugin: occupancy_map_monitor/PointCloudOctomapUpdater

point_cloud_topic: /camera/depth/color/points

max_range: 5.0

frame_subsample: 1

point_subsample: 1

2021,1月更新:上面的sensor配置可以展示出效果,但是会出现一个问题,那就是八叉树地图将机械臂的位置也当成了障碍物,所以应该设定一个机械臂的范围,修改的配置如下:

sensors:

- sensor_plugin: occupancy_map_monitor/PointCloudOctomapUpdater

point_cloud_topic: /camera/depth/color/points

max_range: 5.0

frame_subsample: 1

point_subsample: 1

padding_offset: 0.1

padding_scale: 1.0

max_update_rate: 1.0

filtered_cloud_topic: filtered_cloud

和官方的配置有所不同,因为我是用的是realsense深度传感器,可在话题/camera/depth/color/points上发布点云信息,如果你使用的是其他公司的RGBD相机,可用参考官方的深度相机配置方法:

sensors:

- sensor_plugin: occupancy_map_monitor/DepthImageOctomapUpdater

image_topic: /head_mount_kinect/depth_registered/image_raw

queue_size: 5

near_clipping_plane_distance: 0.3

far_clipping_plane_distance: 5.0

shadow_threshold: 0.2

padding_scale: 4.0

padding_offset: 0.03

filtered_cloud_topic: filtered_cloud

2.填写launch文件夹下的redwall_arm_moveit_sensor_manager.launch.xml,在launch目录下,有两个与sensor相关的launch文件,一个是sensor_manager.launch.xml,该文件是moveit生成配置文件后自动生成好的。另一个是XXX_moveit_sensor_manager.launch.xml文件,这也是自动生成好的,只不过是个空的launch文件,需要填写以下内容。

<launch>

<param name="camera_link" type="string" value="odom_combined" />

<param name="octomap_resolution" type="double" value="0.05" />

<param name="max_range" type="double" value="5.0" />

<rosparam command="load" file="$(find redwall_arm_moveit_config)/config/sensors_3d.yaml" />

</launch>

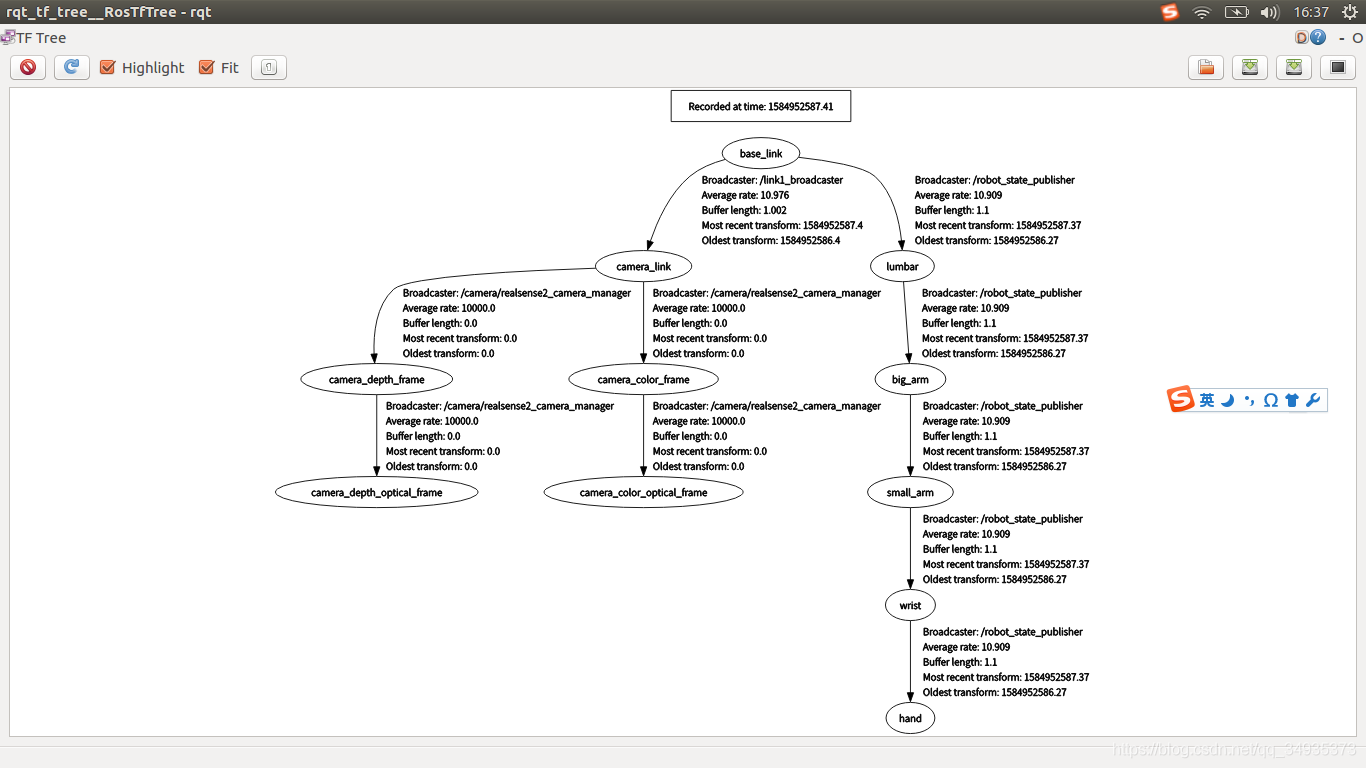

第一个参数是你想要将八叉树地图发布在那个坐标系,我的发布在camera_link,其余两个参数就是和八叉树地图的分辨率有关了,据说这个分辨率太大,可能都是地图无法加载显示,反正我没有遇到这个问题。最后一个参数加载的就是上面配置的sensors_3d.yaml了。 3.由于现在还是在疫情阶段,还没有进行手眼标定,无法确定相机坐标系和世界坐标系的坐标变换,所以简单修改realsense包中的demo_pointcloud.launch文件,将RViz注释掉,添加一个camera_link到base_link的静态坐标变换。

<launch>

<arg name="serial_no" default=""/>

<arg name="json_file_path" default=""/>

<arg name="camera" default="camera"/>

<group ns="$(arg camera)">

<include file="$(find realsense2_camera)/launch/includes/nodelet.launch.xml">

<arg name="serial_no" value="$(arg serial_no)"/>

<arg name="json_file_path" value="$(arg json_file_path)"/>

<arg name="depth_width" value="640"/>

<arg name="depth_height" value="480"/>

<arg name="depth_fps" value="30"/>

<arg name="color_width" value="640"/>

<arg name="color_height" value="480"/>

<arg name="color_fps" value="30"/>

<arg name="enable_depth" value="true"/>

<arg name="enable_color" value="true"/>

<arg name="enable_infra1" value="false"/>

<arg name="enable_infra2" value="false"/>

<arg name="enable_fisheye" value="false"/>

<arg name="enable_gyro" value="false"/>

<arg name="enable_accel" value="false"/>

<arg name="enable_pointcloud" value="true"/>

<arg name="enable_sync" value="true"/>

<arg name="tf_prefix" value="$(arg camera)"/>

</include>

<!-- <node name="rviz" pkg="rviz" type="rviz" args="-d $(find realsense2_camera)/rviz/pointcloud.rviz" required="true" />-->

</group>

<node pkg="tf" type="static_transform_publisher" name="link1_broadcaster" args="1 0 0 0 0 0 1 base_link camera_link 100" />

</launch>

4.接下来就是启动节点,连上深度相机,查看效果了:

# 启动相机节点发布点云数据

$ roslaunch realsense2_camera demo_pointcloud.launch

# 启动moveit

$ roslaunch redwall_arm_moveit_config demo.launch

# 查看坐标变换

$ rosrun rqt_tf_tree rqt_tf_tree