Kubernetes (K8S): kube-prometheus Overview and Deployment

Host configuration plan

| Server name (hostname) | OS version | Specs | Internal IP | External IP (simulated) |
|---|---|---|---|---|
| k8s-master | CentOS 7.7 | 2C/4G/20G | 172.16.1.110 | 10.0.0.110 |
| k8s-node01 | CentOS 7.7 | 2C/4G/20G | 172.16.1.111 | 10.0.0.111 |
| k8s-node02 | CentOS 7.7 | 2C/4G/20G | 172.16.1.112 | 10.0.0.112 |

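Prometheus overview

Prometheus is an open-source systems monitoring and alerting toolkit. Since its inception in 2012, many companies and organizations have adopted it. It is now a standalone open-source project, maintained independently of any company. In 2016, Prometheus joined the Cloud Native Computing Foundation (CNCF) as its second hosted project, after Kubernetes.

Prometheus also performs well enough to support clusters on the scale of tens of thousands of machines.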

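Key features of Prometheus

- A multi-dimensional data model

- A flexible query language (PromQL)

- No reliance on distributed storage; single server nodes are autonomous

- Time series collection through a pull model over HTTP

- Pushing time series is supported through an intermediary gateway

- Targets are discovered through service discovery or static configuration

- Support for many kinds of graphing and dashboarding, for example Grafana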

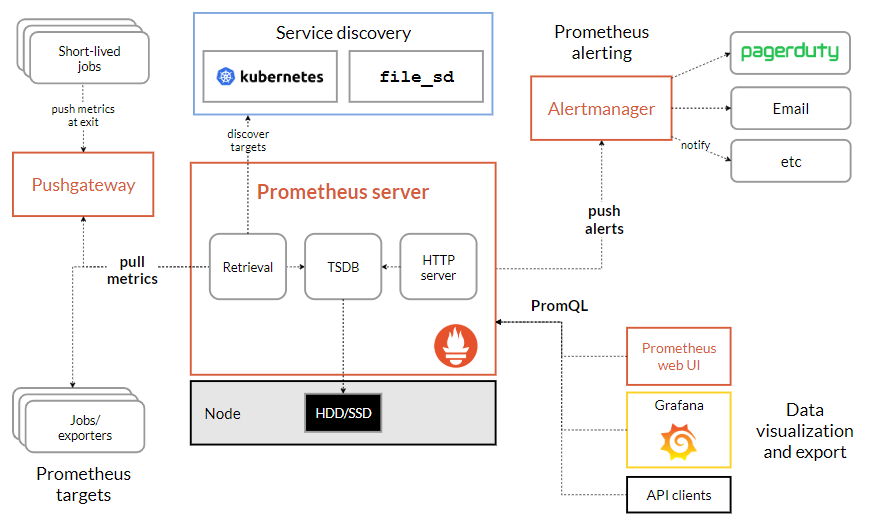

Architecture diagram

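Basic principle

Prometheus works by periodically scraping the state of monitored components over HTTP. Any component can be monitored as long as it exposes a suitable HTTP endpoint; no SDK or other integration work is required.

This makes it a good fit for monitoring virtualized environments such as VMs, Docker, and Kubernetes. The program that exposes a monitored component's information over HTTP is called an exporter. Most components commonly used by internet companies already have a ready-made exporter, for example Varnish, HAProxy, Nginx, MySQL, and Linux system information (disk, memory, CPU, network, and so on).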
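To make the exporter idea concrete, here is a minimal sketch of the plain text that a scrape endpoint returns. The helper name `render_gauge` and the metric `demo_disk_free_bytes` are hypothetical and only serve as illustration; a real exporter would serve this text over HTTP, conventionally at `/metrics`.

```shell
# render_gauge NAME HELP VALUE [LABELS]
# Prints one gauge sample in the Prometheus text exposition format.
# NAME, HELP and LABELS are illustrative values chosen by the caller.
render_gauge() {
    name=$1 help=$2 value=$3 labels=${4:-}
    printf '# HELP %s %s\n' "$name" "$help"
    printf '# TYPE %s gauge\n' "$name"
    if [ -n "$labels" ]; then
        # Labels add dimensions to the sample: name{key="value"} value
        printf '%s{%s} %s\n' "$name" "$labels" "$value"
    else
        printf '%s %s\n' "$name" "$value"
    fi
}
```

For example, `render_gauge demo_disk_free_bytes "Free disk space in bytes." 1024 'mount="/"'` prints a HELP line, a TYPE line, and one labelled sample.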

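The three main Prometheus components

- Server: responsible for collecting and storing data, and provides support for the PromQL query language.

- Alertmanager: the alert manager, used for sending alerts.

- Push Gateway: an intermediate gateway to which short-lived jobs can actively push their metrics.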

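Workflow

- The Prometheus daemon scrapes metrics from its targets at regular intervals. Each target has to expose an HTTP endpoint for it to scrape. Targets can be specified through the configuration file, text files, Zookeeper, Consul, DNS SRV lookup, and other mechanisms. Prometheus monitors by pulling: the server either pulls data directly from a target, or pulls it from an intermediate gateway to which the data was pushed.

- Prometheus stores all scraped data locally, applies rules to clean up and aggregate it, and stores the results as new time series.

- Prometheus exposes the collected data for visualization through PromQL and other APIs. Many charting options are supported, for example Grafana, the bundled PromDash (since deprecated), and Prometheus's own template engine. Prometheus also offers an HTTP API for queries, so you can build whatever output you need.

- PushGateway lets clients actively push metrics to it, while Prometheus simply scrapes the gateway on its regular schedule.

- Alertmanager is a component independent of Prometheus. Alerting rules are written as PromQL expressions and evaluated by the Prometheus server; Alertmanager receives the resulting alerts and takes care of grouping, deduplication, and routing, which makes notification very flexible.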
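The push path in the workflow above can be sketched with curl. This is a minimal sketch under stated assumptions: the deployment in this article does not appear to include a Pushgateway service, so the gateway address is hypothetical, and `push_url` and `push_metric` are illustrative helper names. The URL layout `/metrics/job/<job>/instance/<instance>` follows the Pushgateway grouping-key convention.

```shell
# push_url BASE JOB [INSTANCE]
# Builds the Pushgateway URL: BASE/metrics/job/<job>[/instance/<instance>].
push_url() {
    base=${1%/}                      # drop one trailing slash, if present
    url="$base/metrics/job/$2"
    if [ -n "${3:-}" ]; then
        url="$url/instance/$3"
    fi
    printf '%s\n' "$url"
}

# push_metric BASE JOB NAME VALUE
# Sends a single sample to the gateway. Prometheus does not receive it
# directly; it picks the value up the next time it scrapes the gateway.
push_metric() {
    printf '%s %s\n' "$3" "$4" |
        curl -s --data-binary @- "$(push_url "$1" "$2")"
}
```

A short-lived batch job could then call something like `push_metric http://pushgateway.example.org:9091 nightly_backup backup_duration_seconds 42` just before it exits.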

Deploying kube-prometheus

GitHub repository of kube-prometheus:

https://github.com/coreos/kube-prometheus/

This article uses the release-0.2 version (tag v0.2.0) rather than any other version.

Downloading kube-prometheus and modifying its configuration

Download

    [root@k8s-master prometheus]# pwd
    /root/k8s_practice/prometheus
    [root@k8s-master prometheus]#
    [root@k8s-master prometheus]# wget https://github.com/coreos/kube-prometheus/archive/v0.2.0.tar.gz
    [root@k8s-master prometheus]# tar xf v0.2.0.tar.gz
    [root@k8s-master prometheus]# ll
    total 432
    drwxrwxr-x 10 root root   4096 Sep 13  2019 kube-prometheus-0.2.0
    -rw-r--r--  1 root root 200048 Jul 19 11:41 v0.2.0.tar.gz

Configuration changes

    # Current directory
    [root@k8s-master manifests]# pwd
    /root/k8s_practice/prometheus/kube-prometheus-0.2.0/manifests
    [root@k8s-master manifests]#
    # Change 1
    [root@k8s-master manifests]# vim grafana-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: grafana
      name: grafana
      namespace: monitoring
    spec:
      type: NodePort    # added
      ports:
      - name: http
        port: 3000
        targetPort: http
        nodePort: 30100    # added
      selector:
        app: grafana
    [root@k8s-master manifests]#
    # Change 2
    [root@k8s-master manifests]# vim prometheus-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        prometheus: k8s
      name: prometheus-k8s
      namespace: monitoring
    spec:
      type: NodePort    # added
      ports:
      - name: web
        port: 9090
        targetPort: web
        nodePort: 30200    # added
      selector:
        app: prometheus
        prometheus: k8s
      sessionAffinity: ClientIP
    [root@k8s-master manifests]#
    # Change 3
    [root@k8s-master manifests]# vim alertmanager-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        alertmanager: main
      name: alertmanager-main
      namespace: monitoring
    spec:
      type: NodePort    # added
      ports:
      - name: web
        port: 9093
        targetPort: web
        nodePort: 30300    # added
      selector:
        alertmanager: main
        app: alertmanager
      sessionAffinity: ClientIP
    [root@k8s-master manifests]#
    # Change 4
    [root@k8s-master manifests]# vim grafana-deployment.yaml
    # Change apps/v1beta2 to apps/v1
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        app: grafana
      name: grafana
      namespace: monitoring
    spec:
      replicas: 1
      selector:
    ………………
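The `nodePort` values added above (30100, 30200, 30300) have to fall inside the cluster's NodePort range, which is 30000-32767 unless the API server was started with a different `--service-node-port-range`. As a quick sanity check before editing the manifests, a small helper along these lines could be used; `valid_node_port` is a hypothetical name, and the bounds assume the default range.

```shell
# valid_node_port PORT
# Succeeds if PORT is an integer inside the default NodePort range
# (30000-32767). Adjust the bounds if your cluster uses a custom range.
valid_node_port() {
    case $1 in
        ''|*[!0-9]*) return 1 ;;     # reject empty or non-numeric input
    esac
    [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}
```

For instance, `valid_node_port 30100 && echo ok` prints `ok`, while `valid_node_port 8080` fails because 8080 is below the range.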

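Checking and downloading the kube-prometheus images

The images are all hosted abroad, so pulls often fail. To download them quickly, we pull from a mirror registry in China and then tag each image with the foreign image name used in the manifests.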

Viewing the kube-prometheus image information

    # Current working directory
    [root@k8s-master manifests]# pwd
    /root/k8s_practice/prometheus/kube-prometheus-0.2.0/manifests
    [root@k8s-master manifests]#
    # All image references
    [root@k8s-master manifests]# grep -riE 'quay.io|k8s.gcr|grafana/' *
    0prometheus-operator-deployment.yaml: - --config-reloader-image=quay.io/coreos/configmap-reload:v0.0.1
    0prometheus-operator-deployment.yaml: - --prometheus-config-reloader=quay.io/coreos/prometheus-config-reloader:v0.33.0
    0prometheus-operator-deployment.yaml: image: quay.io/coreos/prometheus-operator:v0.33.0
    alertmanager-alertmanager.yaml: baseImage: quay.io/prometheus/alertmanager
    grafana-deployment.yaml: - image: grafana/grafana:6.2.2
    kube-state-metrics-deployment.yaml: image: quay.io/coreos/kube-rbac-proxy:v0.4.1
    kube-state-metrics-deployment.yaml: image: quay.io/coreos/kube-rbac-proxy:v0.4.1
    kube-state-metrics-deployment.yaml: image: quay.io/coreos/kube-state-metrics:v1.7.2
    kube-state-metrics-deployment.yaml: image: k8s.gcr.io/addon-resizer:1.8.4
    node-exporter-daemonset.yaml: image: quay.io/prometheus/node-exporter:v0.18.1
    node-exporter-daemonset.yaml: image: quay.io/coreos/kube-rbac-proxy:v0.4.1
    prometheus-adapter-deployment.yaml: image: quay.io/coreos/k8s-prometheus-adapter-amd64:v0.4.1
    prometheus-prometheus.yaml: baseImage: quay.io/prometheus/prometheus
    ##### The output above does not show the image versions of alertmanager and prometheus
    ### Get the alertmanager image version
    [root@k8s-master manifests]# cat alertmanager-alertmanager.yaml
    apiVersion: monitoring.coreos.com/v1
    kind: Alertmanager
    metadata:
      labels:
        alertmanager: main
      name: main
      namespace: monitoring
    spec:
      baseImage: quay.io/prometheus/alertmanager
      nodeSelector:
        kubernetes.io/os: linux
      replicas: 3
      securityContext:
        fsGroup: 2000
        runAsNonRoot: true
        runAsUser: 1000
      serviceAccountName: alertmanager-main
      version: v0.18.0
    ##### So the alertmanager image version is v0.18.0
    ### Get the prometheus image version
    [root@k8s-master manifests]# cat prometheus-prometheus.yaml
    apiVersion: monitoring.coreos.com/v1
    kind: Prometheus
    metadata:
      labels:
        prometheus: k8s
      name: k8s
      namespace: monitoring
    spec:
      alerting:
        alertmanagers:
        - name: alertmanager-main
          namespace: monitoring
          port: web
      baseImage: quay.io/prometheus/prometheus
      nodeSelector:
        kubernetes.io/os: linux
      podMonitorSelector: {}
      replicas: 2
      resources:
        requests:
          memory: 400Mi
      ruleSelector:
        matchLabels:
          prometheus: k8s
          role: alert-rules
      securityContext:
        fsGroup: 2000
        runAsNonRoot: true
        runAsUser: 1000
      serviceAccountName: prometheus-k8s
      serviceMonitorNamespaceSelector: {}
      serviceMonitorSelector: {}
      version: v2.11.0
    ##### So the prometheus image version is v2.11.0
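Reading the two manifests by eye works, but the same lookup can be scripted. The sketch below assumes that the image reference is simply `baseImage` joined with `version` (which matches the images pulled later in this article, and assumes no tag, sha, or image override is set in the resource). The function name `operator_image` is hypothetical.

```shell
# operator_image < manifest.yaml
# Reads an Alertmanager or Prometheus custom resource on stdin and prints
# "<baseImage>:<version>". Fails if either field is missing.
operator_image() {
    awk '
        $1 == "baseImage:" { base = $2 }
        $1 == "version:"   { ver  = $2 }
        END {
            if (base != "" && ver != "") print base ":" ver
            else exit 1
        }
    '
}
```

Running `operator_image < alertmanager-alertmanager.yaml` in the manifests directory would then print the full image reference in one step.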

Run the script: download and re-tag the images [run on every machine in the cluster]

    [root@k8s-master software]# vim download_prometheus_image.sh
    #!/bin/bash

    ##### Run on both the master node and the worker nodes [all machines]

    # Load environment variables
    . /etc/profile
    . /etc/bashrc

    ###############################################
    # Pull the images Prometheus needs from a mirror registry in China, then re-tag them
    src_registry="registry.cn-beijing.aliyuncs.com/cloud_registry"
    # Image list (a bash array, which is why the shebang is bash rather than sh)
    images=(
        kube-rbac-proxy:v0.4.1
        kube-state-metrics:v1.7.2
        k8s-prometheus-adapter-amd64:v0.4.1
        configmap-reload:v0.0.1
        prometheus-config-reloader:v0.33.0
        prometheus-operator:v0.33.0
    )
    # Loop over the images hosted on the mirror
    for img in "${images[@]}";
    do
        # Pull the image from the mirror
        docker pull ${src_registry}/$img
        # Re-tag it with the name used in the manifests
        docker tag ${src_registry}/$img quay.io/coreos/$img
        # Remove the original mirror tag
        docker rmi ${src_registry}/$img
        # Print a separator
        echo "======== $img download OK ========"
    done

    ##### Other images
    image_name="alertmanager:v0.18.0"
    docker pull ${src_registry}/${image_name} && docker tag ${src_registry}/${image_name} quay.io/prometheus/${image_name} && docker rmi ${src_registry}/${image_name}
    echo "======== ${image_name} download OK ========"

    image_name="node-exporter:v0.18.1"
    docker pull ${src_registry}/${image_name} && docker tag ${src_registry}/${image_name} quay.io/prometheus/${image_name} && docker rmi ${src_registry}/${image_name}
    echo "======== ${image_name} download OK ========"

    image_name="prometheus:v2.11.0"
    docker pull ${src_registry}/${image_name} && docker tag ${src_registry}/${image_name} quay.io/prometheus/${image_name} && docker rmi ${src_registry}/${image_name}
    echo "======== ${image_name} download OK ========"

    image_name="grafana:6.2.2"
    docker pull ${src_registry}/${image_name} && docker tag ${src_registry}/${image_name} grafana/${image_name} && docker rmi ${src_registry}/${image_name}
    echo "======== ${image_name} download OK ========"

    image_name="addon-resizer:1.8.4"
    docker pull ${src_registry}/${image_name} && docker tag ${src_registry}/${image_name} k8s.gcr.io/${image_name} && docker rmi ${src_registry}/${image_name}
    echo "======== ${image_name} download OK ========"


    echo "********** prometheus docker images OK! **********"
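The script repeats the same pull, tag, and remove sequence for every image; only the target registry differs. One possible way to factor that out is sketched below. The helper names `target_repo` and `mirror_image` are hypothetical, and the mapping simply mirrors the image names used in the script above.

```shell
# target_repo IMAGE
# Prints the registry/namespace the manifests expect for IMAGE ("name:tag").
target_repo() {
    case ${1%%:*} in                 # compare on the name, without the tag
        alertmanager|node-exporter|prometheus) echo "quay.io/prometheus" ;;
        grafana)                               echo "grafana" ;;
        addon-resizer)                         echo "k8s.gcr.io" ;;
        *)                                     echo "quay.io/coreos" ;;
    esac
}

# mirror_image SRC_REGISTRY IMAGE
# Pulls IMAGE from the mirror, re-tags it under its expected name, and
# removes the mirror tag. Stops at the first step that fails.
mirror_image() {
    docker pull "$1/$2" &&
    docker tag "$1/$2" "$(target_repo "$2")/$2" &&
    docker rmi "$1/$2"
}
```

With these two functions, each image needs just one call, for example `mirror_image "$src_registry" prometheus:v2.11.0`, and a failed pull is reported through the exit status instead of being followed by an "OK" message.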

After the script has run, the following images are present

    [root@k8s-master software]# docker images | grep 'quay.io/coreos'
    quay.io/coreos/kube-rbac-proxy                v0.4.1          a9d1a87e4379   6 days ago     41.3MB
    quay.io/coreos/flannel                        v0.12.0-amd64   4e9f801d2217   4 months ago   52.8MB   ## already present before
    quay.io/coreos/kube-state-metrics             v1.7.2          3fd71b84d250   6 months ago   33.1MB
    quay.io/coreos/prometheus-config-reloader     v0.33.0         64751efb2200   8 months ago   17.6MB
    quay.io/coreos/prometheus-operator            v0.33.0         8f2f814d33e1   8 months ago   42.1MB
    quay.io/coreos/k8s-prometheus-adapter-amd64   v0.4.1          5f0fc84e586c   15 months ago  60.7MB
    quay.io/coreos/configmap-reload               v0.0.1          3129a2ca29d7   3 years ago    4.79MB
    [root@k8s-master software]#
    [root@k8s-master software]# docker images | grep 'quay.io/prometheus'
    quay.io/prometheus/node-exporter   v0.18.1   d7707e6f5e95   11 days ago     22.9MB
    quay.io/prometheus/prometheus      v2.11.0   de242295e225   2 months ago    126MB
    quay.io/prometheus/alertmanager    v0.18.0   30594e96cbe8   10 months ago   51.9MB
    [root@k8s-master software]#
    [root@k8s-master software]# docker images | grep 'grafana'
    grafana/grafana   6.2.2   a532fe3b344a   9 months ago   248MB
    [root@k8s-node01 software]#
    [root@k8s-node01 software]# docker images | grep 'addon-resizer'
    k8s.gcr.io/addon-resizer   1.8.4   5ec630648120   20 months ago   38.3MB
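Since the script has to run on every node, it can help to check mechanically that nothing is missing rather than reading several `grep` outputs. The following is a minimal sketch; `missing_images` is a hypothetical helper that compares a list of expected references against `repository:tag` lines read from standard input.

```shell
# missing_images EXPECTED...
# Reads "repository:tag" lines on stdin, for example from
#   docker images --format '{{.Repository}}:{{.Tag}}'
# and prints every EXPECTED image that is absent.
# Returns non-zero if at least one image is missing.
missing_images() {
    present=$(cat)
    rc=0
    for img in "$@"; do
        # -x: match the whole line, -F: treat the name as a fixed string
        if ! printf '%s\n' "$present" | grep -qxF -- "$img"; then
            printf '%s\n' "$img"
            rc=1
        fi
    done
    return "$rc"
}
```

A typical call would be `docker images --format '{{.Repository}}:{{.Tag}}' | missing_images quay.io/prometheus/prometheus:v2.11.0 grafana/grafana:6.2.2`, extended with the remaining image names from the listing above.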

Starting kube-prometheus

Start Prometheus

    [root@k8s-master kube-prometheus-0.2.0]# pwd
    /root/k8s_practice/prometheus/kube-prometheus-0.2.0
    [root@k8s-master kube-prometheus-0.2.0]#
    ### If errors occur, run the command again one or more times
    [root@k8s-master kube-prometheus-0.2.0]# kubectl apply -f manifests/
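Re-running the command by hand is fine for a one-off install. If the step is scripted, a small generic retry wrapper is one way to express "run it again until it succeeds". This is a sketch, not part of kube-prometheus; the function name `retry` and its argument order are assumptions made for this example.

```shell
# retry MAX DELAY COMMAND...
# Runs COMMAND until it succeeds, at most MAX times, sleeping DELAY seconds
# between attempts. Returns 0 on success and 1 if every attempt failed.
retry() {
    max=$1 delay=$2
    shift 2
    attempt=1
    while [ "$attempt" -le "$max" ]; do
        if "$@"; then
            return 0
        fi
        echo "attempt $attempt/$max failed" >&2
        attempt=$((attempt + 1))
        # Only wait when another attempt is still coming
        [ "$attempt" -le "$max" ] && sleep "$delay"
    done
    return 1
}
```

For the step above this could be invoked as `retry 3 10 kubectl apply -f manifests/`, which gives up after three failed attempts.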

Checking svc and pod status after startup

    [root@k8s-master ~]# kubectl top node
    NAME         CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
    k8s-master   152m         7%     1311Mi          35%
    k8s-node01   100m         5%     928Mi           54%
    k8s-node02   93m          4%     979Mi           56%
    [root@k8s-master ~]#
    [root@k8s-master ~]# kubectl get svc -n monitoring
    NAME                    TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)                      AGE
    alertmanager-main       NodePort    10.97.249.249    <none>        9093:30300/TCP               7m21s
    alertmanager-operated   ClusterIP   None             <none>        9093/TCP,9094/TCP,9094/UDP   7m13s
    grafana                 NodePort    10.101.183.103   <none>        3000:30100/TCP               7m20s
    kube-state-metrics      ClusterIP   None             <none>        8443/TCP,9443/TCP            7m20s
    node-exporter           ClusterIP   None             <none>        9100/TCP                     7m20s
    prometheus-adapter      ClusterIP   10.105.174.86    <none>        443/TCP                      7m19s
    prometheus-k8s          NodePort    10.109.179.233   <none>        9090:30200/TCP               7m19s
    prometheus-operated     ClusterIP   None             <none>        9090/TCP                     7m3s
    prometheus-operator     ClusterIP   None             <none>        8080/TCP                     7m21s
    [root@k8s-master ~]#
    [root@k8s-master ~]# kubectl get pod -n monitoring -o wide
    NAME                                  READY   STATUS    RESTARTS   AGE     IP             NODE         NOMINATED NODE   READINESS GATES
    alertmanager-main-0                   2/2     Running   0          2m11s   10.244.4.164   k8s-node01   <none>           <none>
    alertmanager-main-1                   2/2     Running   0          2m11s   10.244.2.225   k8s-node02   <none>           <none>
    alertmanager-main-2                   2/2     Running   0          2m11s   10.244.4.163   k8s-node01   <none>           <none>
    grafana-5cd56df4cd-6d75r              1/1     Running   0          29s     10.244.2.227   k8s-node02   <none>           <none>
    kube-state-metrics-7d4bb66d8d-gx7w4   4/4     Running   0          2m18s   10.244.2.223   k8s-node02   <none>           <none>
    node-exporter-pl47v                   2/2     Running   0          2m17s   172.16.1.110   k8s-master   <none>           <none>
    node-exporter-tmmbw                   2/2     Running   0          2m17s   172.16.1.111   k8s-node01   <none>           <none>
    node-exporter-w8wd9                   2/2     Running   0          2m17s   172.16.1.112   k8s-node02   <none>           <none>
    prometheus-adapter-c676d8764-phj69    1/1     Running   0          2m17s   10.244.2.224   k8s-node02   <none>           <none>
    prometheus-k8s-0                      3/3     Running   1          2m1s    10.244.2.226   k8s-node02   <none>           <none>
    prometheus-k8s-1                      3/3     Running   0          2m1s    10.244.4.165   k8s-node01   <none>           <none>
    prometheus-operator-7559d67ff-lk86l   1/1     Running   0          2m18s   10.244.4.162   k8s-node01   <none>           <none>
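In the pod listing, a healthy pod shows `Running` and a READY value whose two numbers match (for example `3/3`). That check can be automated with a short filter over the `kubectl get pod` output. The sketch below is an illustration only; `not_ready_pods` is a hypothetical name, and it treats anything other than a fully ready `Running` pod as a problem.

```shell
# not_ready_pods
# Reads `kubectl get pod` output on stdin and prints the name of every pod
# that is not Running or whose READY count is incomplete (such as 2/3).
# Returns non-zero if at least one such pod was found.
not_ready_pods() {
    awk '
        $1 == "NAME" { next }        # skip the header row
        NF < 3       { next }        # skip blank or malformed lines
        {
            split($2, ready, "/")
            if ($3 != "Running" || ready[1] != ready[2]) {
                print $1
                bad = 1
            }
        }
        END { exit bad }
    '
}
```

Piping the command from the listing into it, `kubectl get pod -n monitoring | not_ready_pods`, prints nothing and succeeds once the whole stack is up.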

Accessing kube-prometheus

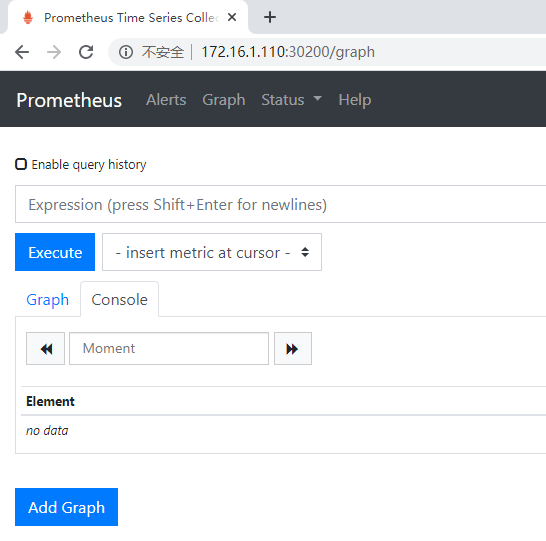

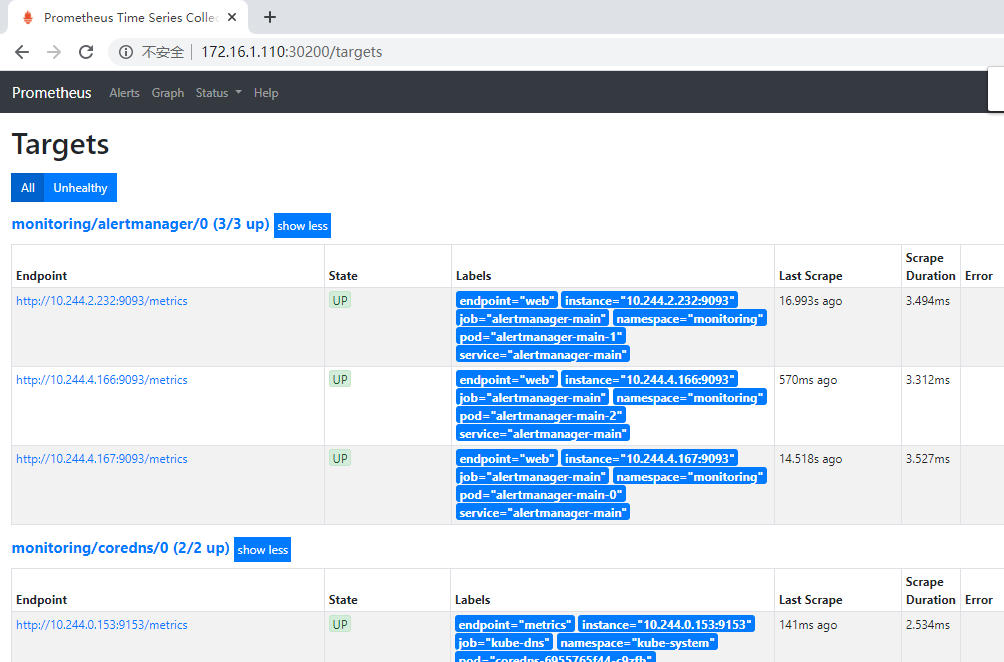

Accessing prometheus-service

The address is:

http://172.16.1.110:30200/

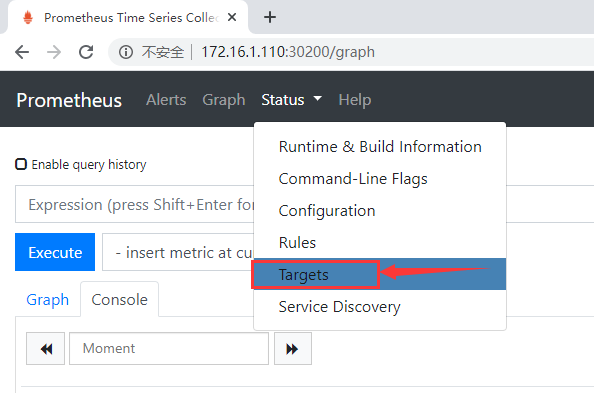

The following address shows that Prometheus has successfully connected to the Kubernetes apiserver.

http://172.16.1.110:30200/targets

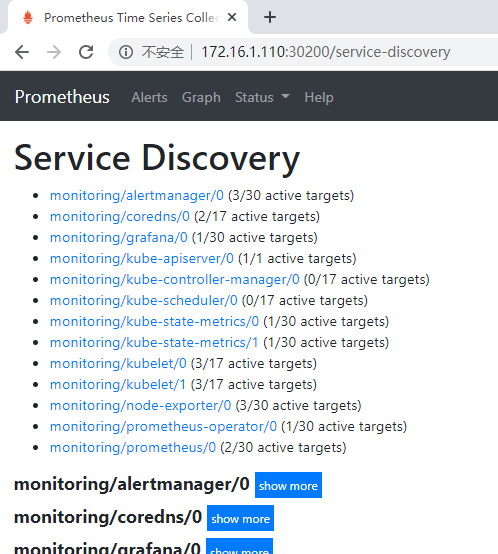

Viewing service discovery

http://172.16.1.110:30200/service-discovery

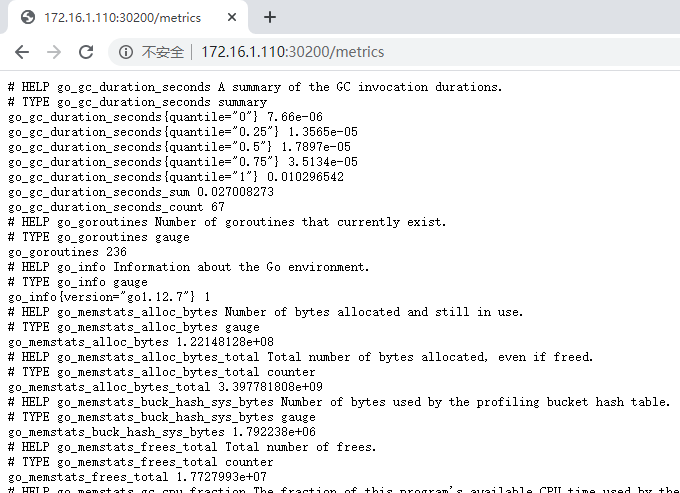

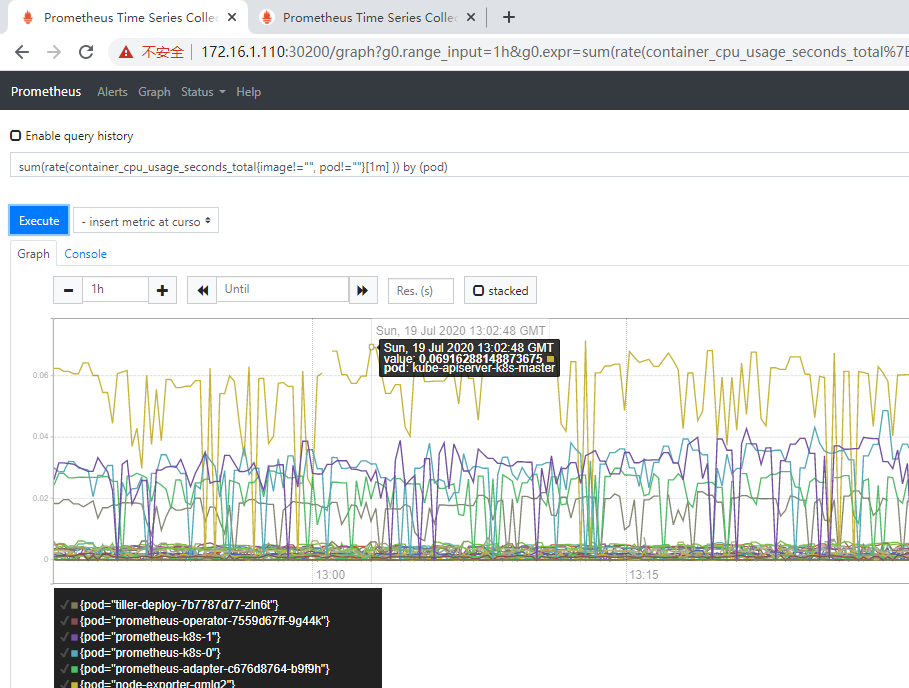

Viewing Prometheus's own metrics

http://172.16.1.110:30200/metrics
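The `/metrics` page is plain text, so individual values can be picked out on the command line as well as in the browser. The filter below is a minimal sketch: `metric_value` is a hypothetical helper, and it assumes samples carry no trailing timestamp, so the value is the last field on the line.

```shell
# metric_value NAME
# Reads Prometheus text-format metrics on stdin and prints the value of
# every sample whose metric name is exactly NAME (labels are ignored).
metric_value() {
    awk -v name="$1" '
        /^#/ { next }                          # skip HELP/TYPE comments
        {
            brace = index($1, "{")             # where the label set starts
            metric = (brace > 0) ? substr($1, 1, brace - 1) : $1
            if (metric == name) print $NF
        }
    '
}
```

For example, `curl -s http://172.16.1.110:30200/metrics | metric_value go_goroutines` would print the current goroutine count of the Prometheus server.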

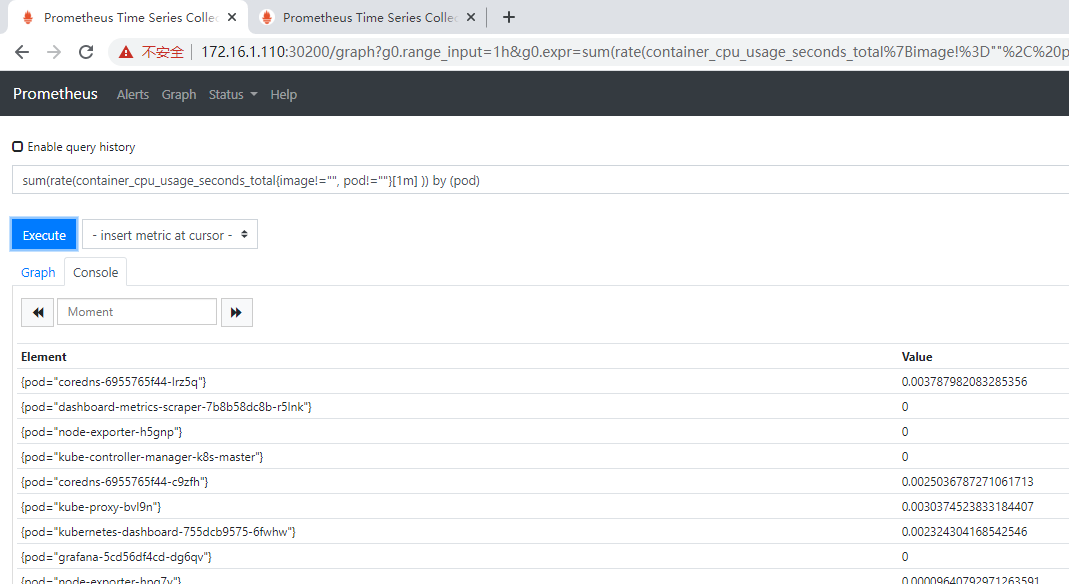

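The Prometheus web UI provides basic querying. For example, to see the CPU usage of every Pod in the K8S cluster, use the following query:

    # Query container_cpu_usage_seconds_total on its own to see which labels are available
    sum(rate(container_cpu_usage_seconds_total{image!="", pod!=""}[1m])) by (pod)

List view

Graph view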
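The same query can be sent to the Prometheus HTTP API instead of the web UI, which is handy for scripts. This is a minimal sketch using the instant-query endpoint `/api/v1/query`; the wrapper name `prom_query` is hypothetical, and the base URL is the NodePort address used in this article.

```shell
# prom_query BASE_URL PROMQL
# Runs an instant query through the Prometheus HTTP API.
# -G sends the url-encoded data as a query string on a GET request.
prom_query() {
    curl -sG --data-urlencode "query=$2" "${1%/}/api/v1/query"
}
```

A call such as `prom_query http://172.16.1.110:30200 'sum(rate(container_cpu_usage_seconds_total{image!="", pod!=""}[1m])) by (pod)'` returns a JSON document whose `data.result` array holds one entry per pod.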

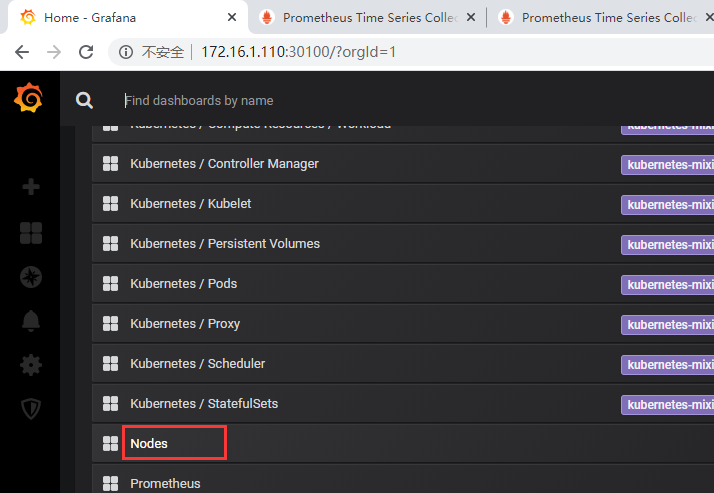

Accessing grafana-service

The address is:

http://172.16.1.110:30100/

On first login, the default username and password are admin/admin

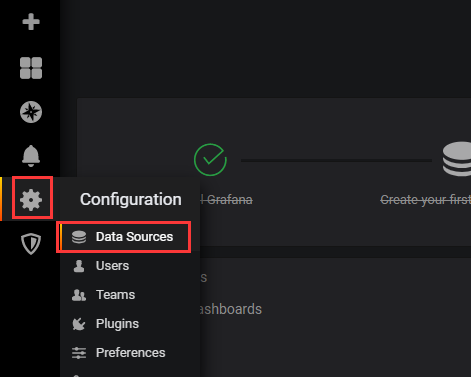

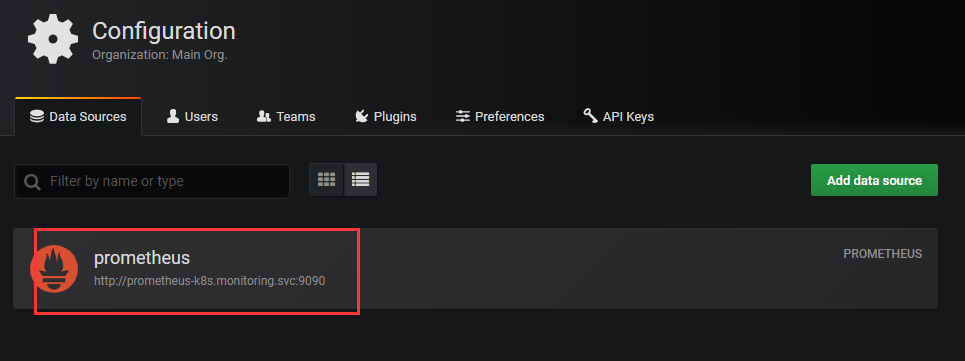

Adding a data source

This brings up the following page

As shown, the data source has already been added by default

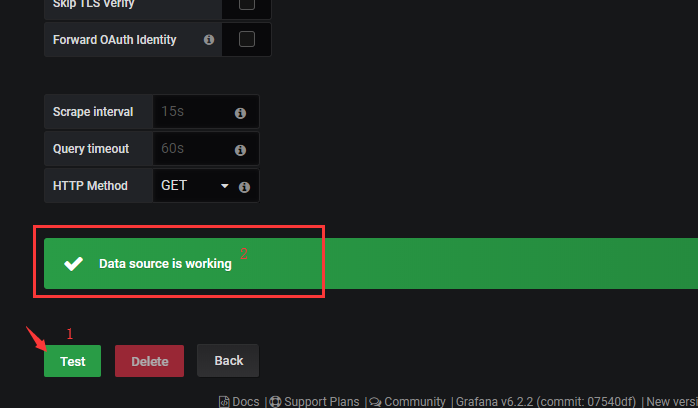

Click into it, scroll down, and click the Test button to verify that the data source works

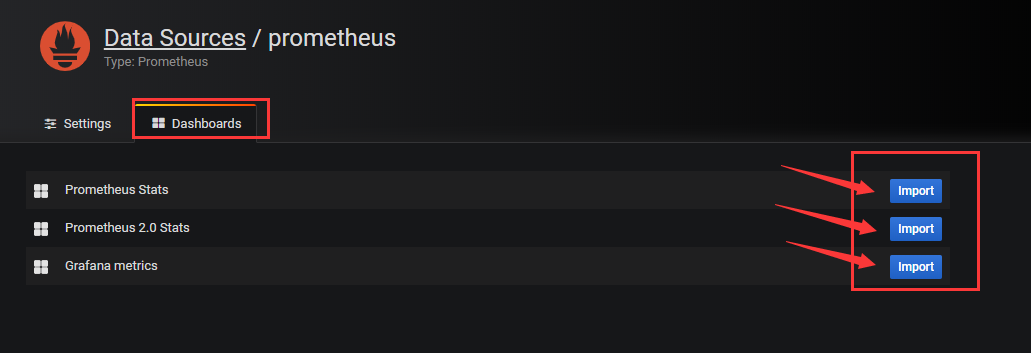

After that, you can import some dashboard templates

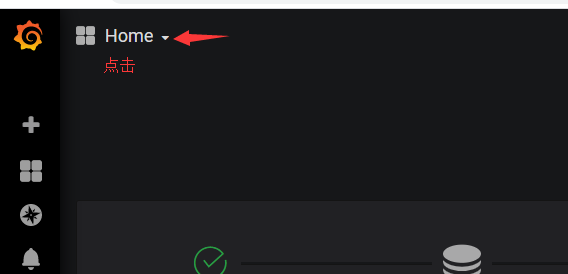

Viewing the data graphically

Troubleshooting

If kubectl apply -f manifests/ prints messages like the following:

    unable to recognize "manifests/alertmanager-alertmanager.yaml": no matches for kind "Alertmanager" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/alertmanager-serviceMonitor.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/grafana-serviceMonitor.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/kube-state-metrics-serviceMonitor.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/node-exporter-serviceMonitor.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-operator-serviceMonitor.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-prometheus.yaml": no matches for kind "Prometheus" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-rules.yaml": no matches for kind "PrometheusRule" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-serviceMonitor.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-serviceMonitorApiserver.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-serviceMonitorCoreDNS.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-serviceMonitorKubeControllerManager.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-serviceMonitorKubeScheduler.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"
    unable to recognize "manifests/prometheus-serviceMonitorKubelet.yaml": no matches for kind "ServiceMonitor" in version "monitoring.coreos.com/v1"

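Then simply run kubectl apply -f manifests/ again. The errors come from an ordering dependency: Alertmanager, Prometheus, PrometheusRule, and ServiceMonitor are custom resources, and they can only be created after the corresponding CustomResourceDefinitions have been registered with the API server.

However, if you are using kube-prometheus v0.3.0, v0.4.0, or v0.5.0 and see the messages above [they persist no matter how many times kubectl apply -f manifests/ is repeated], the cause is not yet clear.

Done!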
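When the error list is long, it helps to reduce it to the distinct kinds that the API server does not know yet. The filter below is a sketch for that purpose; `missing_kinds` is a hypothetical helper that only parses the error text shown above.

```shell
# missing_kinds
# Reads `kubectl apply` error output on stdin and prints one line per
# unrecognised kind: "<apiVersion> <kind> <number of manifests affected>".
missing_kinds() {
    sed -n 's/.*no matches for kind "\([^"]*\)" in version "\([^"]*\)".*/\2 \1/p' |
        sort | uniq -c | awk '{ print $2, $3, $1 }'
}
```

It can be fed directly from the apply step with `kubectl apply -f manifests/ 2>&1 | missing_kinds`. If the same kinds keep showing up, `kubectl get crd | grep monitoring.coreos.com` shows which definitions are actually registered.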
